GradientPolicy is a SoftMax type algorithm, based on Sutton & Barton (2018).

Usage

policy <- GradientPolicy(alpha = 0.1)

Arguments

alpha = 0.1double, temperature parameter alpha specifies how many arms we can explore. When alpha is high, all arms are explored equally, when alpha is low, arms offering higher rewards will be chosen.

Methods

new(epsilon = 0.1)Generates a new GradientPolicy object. Arguments are defined in

the Argument section above.

set_parameters()each policy needs to assign the parameters it wants to keep track of

to list self$theta_to_arms that has to be defined in set_parameters()'s body.

The parameters defined here can later be accessed by arm index in the following way:

theta[[index_of_arm]]$parameter_name

get_action(context)here, a policy decides which arm to choose, based on the current values of its parameters and, potentially, the current context.

set_reward(reward, context)in set_reward(reward, context), a policy updates its parameter values

based on the reward received, and, potentially, the current context.

References

Kuleshov, V., & Precup, D. (2014). Algorithms for multi-armed bandit problems. arXiv preprint arXiv:1402.6028.

Cesa-Bianchi, N., Gentile, C., Lugosi, G., & Neu, G. (2017). Boltzmann exploration done right. In Advances in Neural Information Processing Systems (pp. 6284-6293).

See also

Core contextual classes: Bandit, Policy, Simulator,

Agent, History, Plot

Bandit subclass examples: BasicBernoulliBandit, ContextualLogitBandit,

OfflineReplayEvaluatorBandit

Policy subclass examples: EpsilonGreedyPolicy, ContextualLinTSPolicy

Examples

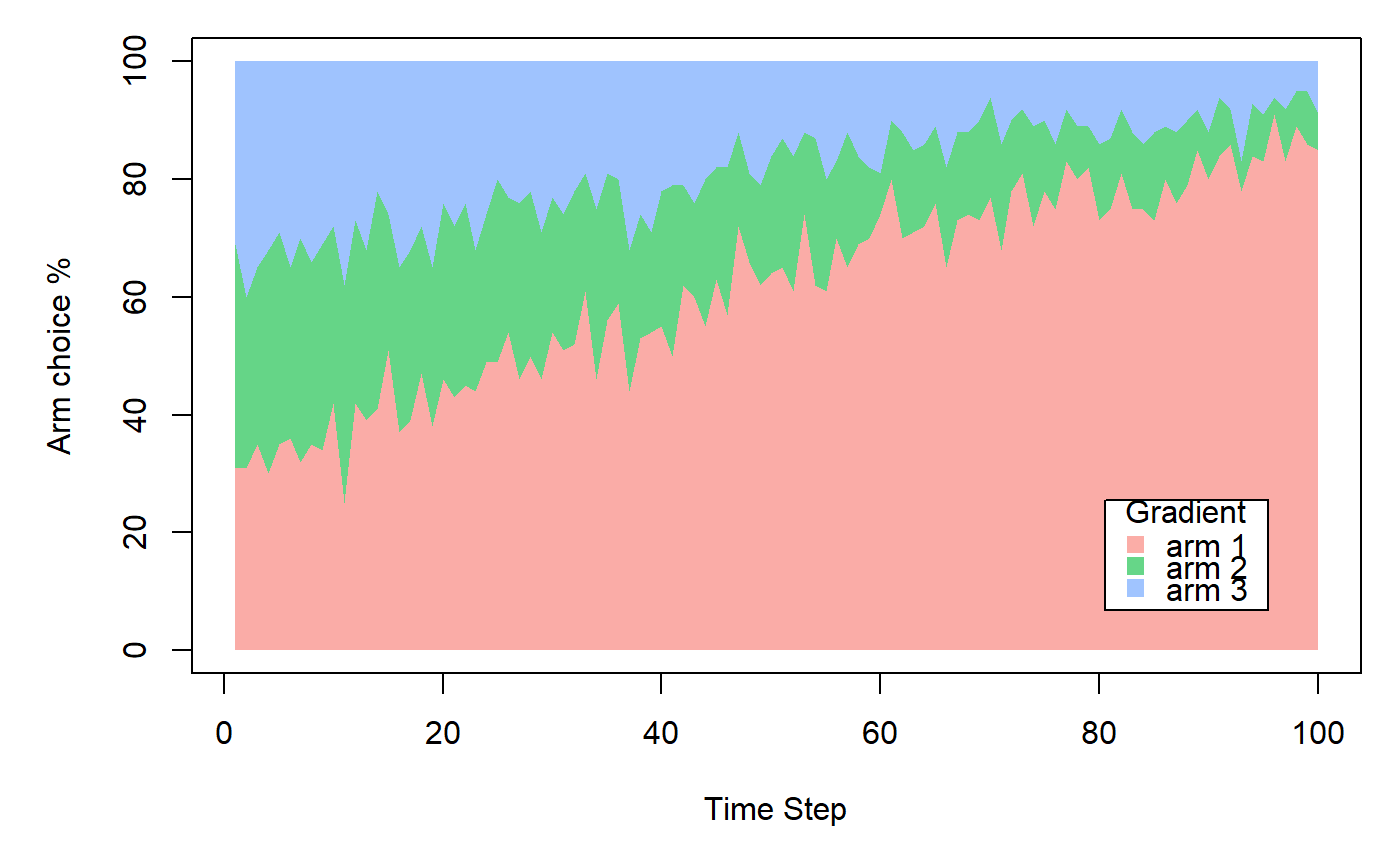

horizon <- 100L simulations <- 100L weights <- c(0.9, 0.1, 0.1) policy <- GradientPolicy$new(alpha = 0.1) bandit <- BasicBernoulliBandit$new(weights = weights) agent <- Agent$new(policy, bandit) history <- Simulator$new(agent, horizon, simulations, do_parallel = FALSE)$run()#>#>#>#>#>#>#>