EpsilonGreedyPolicy chooses an arm at random (explores) with probability epsilon; otherwise, it greedily chooses (exploits) the arm with the highest estimated reward.

Usage

policy <- EpsilonGreedyPolicy$new(epsilon = 0.1)

Arguments

epsilon: numeric; value in the half-open interval (0, 1] indicating the probability with which arms are selected at random (explored). Otherwise, EpsilonGreedyPolicy chooses the best arm (exploits) with a probability of 1 - epsilon.

name: character string specifying this policy. Among other things, name is saved to the History log and displayed in summaries and plots.
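To make the role of epsilon concrete, the following base R sketch computes the per-step probability of selecting each arm. It assumes that exploration draws uniformly from all k arms, the currently best arm included; this is an assumption of the sketch, not something stated above. The helper name selection_probabilities and its arguments k and greedy_arm are hypothetical and not part of the package.

```r
# Hypothetical helper, not part of the package API.
# Returns the per-step probability of selecting each arm, ASSUMING that
# exploration samples uniformly from all k arms (the greedy arm included).
selection_probabilities <- function(epsilon, k, greedy_arm) {
  stopifnot(epsilon > 0, epsilon <= 1, greedy_arm >= 1, greedy_arm <= k)
  probs <- rep(epsilon / k, k)                            # exploration share per arm
  probs[greedy_arm] <- probs[greedy_arm] + (1 - epsilon)  # exploitation share
  probs
}

probs <- selection_probabilities(epsilon = 0.1, k = 3, greedy_arm = 1)
print(round(probs, 4))
```

Under that assumption, the greedy arm is selected slightly more often than 1 - epsilon, because some exploratory draws also land on it.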

Methods

new(epsilon = 0.1): generates a new EpsilonGreedyPolicy object. Arguments are defined in the Arguments section above.

set_parameters(): each policy needs to assign the parameters it wants to keep track of to the list self$theta_to_arms, which has to be defined in the body of set_parameters(). The parameters defined here can later be accessed by arm index in the following way:

theta[[index_of_arm]]$parameter_name

get_action(context): here, a policy decides which arm to choose, based on the current values of its parameters and, potentially, the current context.

set_reward(reward, context): here, a policy updates its parameter values based on the reward received and, potentially, the current context.
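The three method roles described above can be sketched in base R as plain functions. This is a minimal illustration under stated assumptions, not the package's implementation: the function names init_theta, choose_arm and update_arm, and the per-arm parameters n (pull count) and mean (running average reward), are hypothetical. The context is ignored, since epsilon-greedy only needs the estimated rewards.

```r
# Hypothetical stand-alone functions mirroring the three method roles;
# the names and the parameters n and mean are assumptions of this sketch.

# Role of set_parameters(): one parameter template, copied once per arm,
# so that values are reachable as theta[[index_of_arm]]$parameter_name.
init_theta <- function(k, theta_to_arms = list(n = 0, mean = 0)) {
  lapply(seq_len(k), function(arm) theta_to_arms)
}

# Role of get_action(): explore with probability epsilon, otherwise exploit.
choose_arm <- function(theta, epsilon) {
  k <- length(theta)
  if (runif(1) < epsilon) {
    return(sample.int(k, 1))                # explore: uniform random arm
  }
  means <- vapply(theta, function(arm) arm$mean, numeric(1))
  best <- which(means == max(means))        # exploit: arms tied for best
  best[sample.int(length(best), 1)]         # break ties at random
}

# Role of set_reward(): incremental update of the chosen arm's running mean,
# new_mean = old_mean + (reward - old_mean) / n.
update_arm <- function(theta, arm, reward) {
  theta[[arm]]$n <- theta[[arm]]$n + 1
  theta[[arm]]$mean <- theta[[arm]]$mean +
    (reward - theta[[arm]]$mean) / theta[[arm]]$n
  theta
}

# Simulate a three-armed Bernoulli bandit for 2000 steps.
set.seed(1)
weights <- c(0.9, 0.1, 0.1)                 # per-arm reward probabilities
theta <- init_theta(length(weights))
for (step in seq_len(2000)) {
  arm <- choose_arm(theta, epsilon = 0.1)
  reward <- rbinom(1, 1, weights[arm])
  theta <- update_arm(theta, arm, reward)
}
pulls <- vapply(theta, function(arm) arm$n, numeric(1))
print(pulls)
```

Keeping the running mean incrementally avoids storing the full reward history per arm; only n and mean need to be tracked.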

References

Gittins, J., Glazebrook, K., & Weber, R. (2011). Multi-armed bandit allocation indices. John Wiley & Sons. (Original work published 1989)

Sutton, R. S. (1996). Generalization in reinforcement learning: Successful examples using sparse coarse coding. In Advances in neural information processing systems (pp. 1038-1044).

Strehl, A., & Littman, M. (2004). Exploration via model based interval estimation. In International Conference on Machine Learning.

Yue, Y., Broder, J., Kleinberg, R., & Joachims, T. (2012). The k-armed dueling bandits problem. Journal of Computer and System Sciences, 78(5), 1538-1556.

See also

Core contextual classes: Bandit, Policy, Simulator, Agent, History, Plot

Bandit subclass examples: BasicBernoulliBandit, ContextualLogitBandit, OfflineReplayEvaluatorBandit

Policy subclass examples: EpsilonGreedyPolicy, ContextualLinTSPolicy

Examples

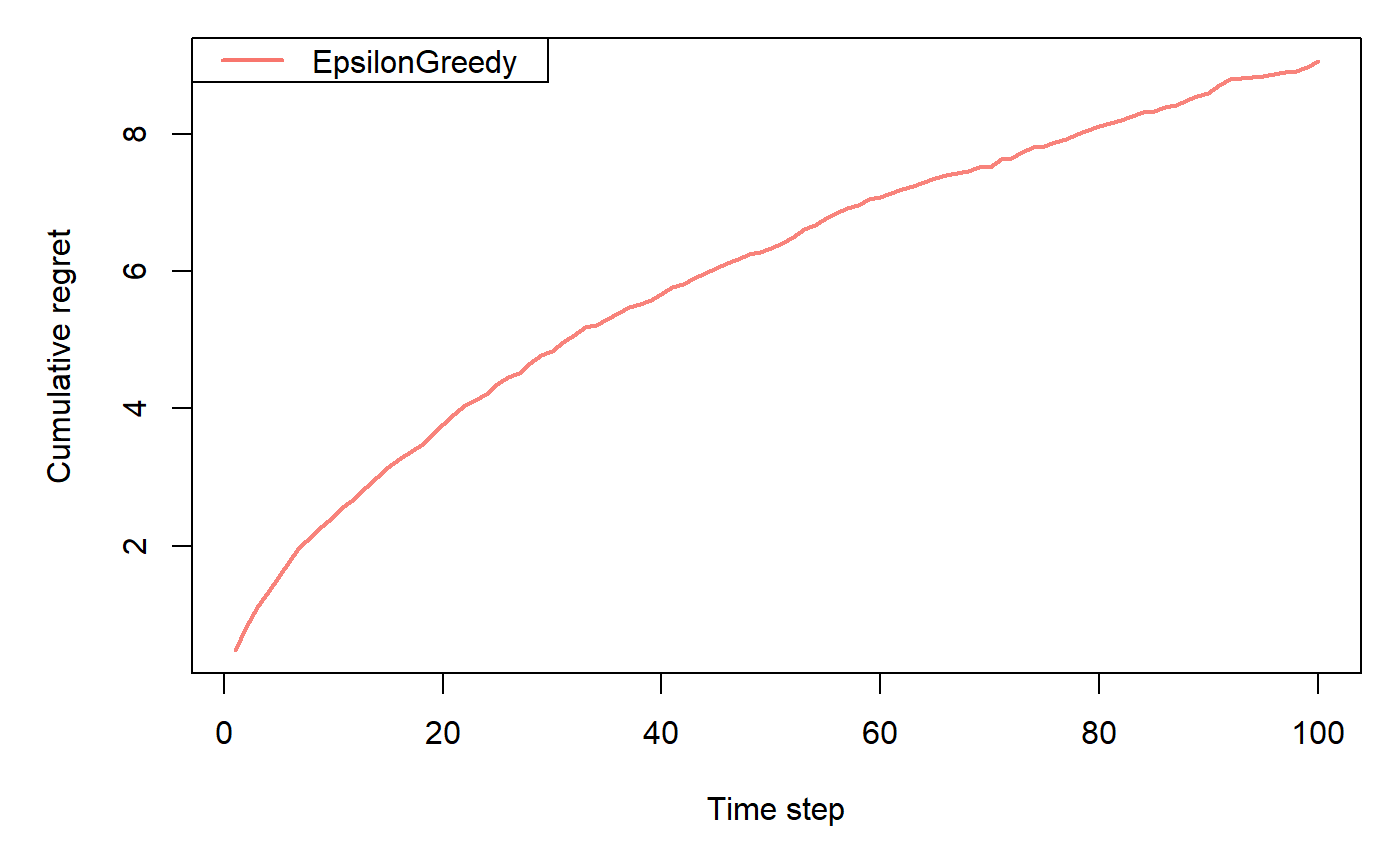

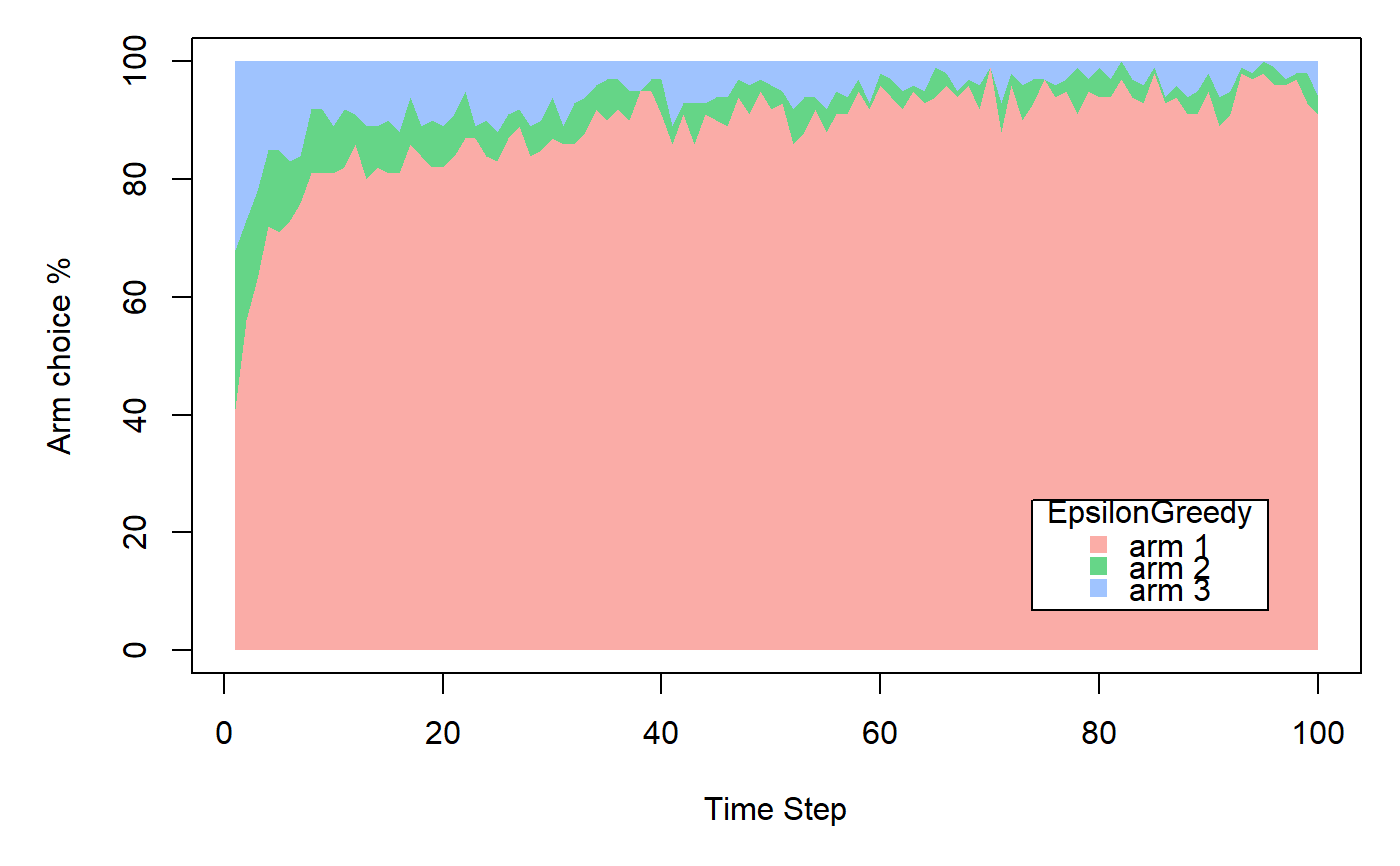

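library(contextual)
# Simulation settings: time steps per simulation and number of repeated simulations
horizon <- 100L
simulations <- 100L
# Reward probability of each of the three arms
weights <- c(0.9, 0.1, 0.1)
# Explore with probability 0.1, exploit otherwise
policy <- EpsilonGreedyPolicy$new(epsilon = 0.1)
bandit <- BasicBernoulliBandit$new(weights = weights)
agent <- Agent$new(policy, bandit)
# Run all simulations sequentially and collect the results
history <- Simulator$new(agent, horizon, simulations, do_parallel = FALSE)$run()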